Multiagent Reinforcement Learning-Based Adaptive Sampling for Conformational Dynamics of Proteins

BibTeX

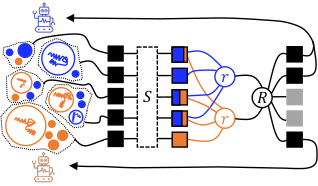

Machine learning is increasingly applied to improve the efficiency and accuracy of molecular dynamics (MD) simulations. Although the growth of distributed computer clusters has allowed researchers to obtain higher amounts of data, unbiased MD simulations have difficulty sampling rare states, even under massively parallel adaptive sampling schemes. To address this issue, several algorithms inspired by reinforcement learning (RL) have arisen to promote exploration of the slow collective variables (CVs) of complex systems. Nonetheless, most of these algorithms are not well-suited to leverage the information gained by simultaneously sampling a system from different initial states (e.g., a protein in different conformations associated with distinct functional states). To fill this gap, we propose two algorithms inspired by multiagent RL that extend the functionality of closely related techniques (REAP and TSLC) to situations where the sampling can be accelerated by learning from different regions of the energy landscape through coordinated agents. Essentially, the algorithms work by remembering which agent discovered each conformation and sharing this information with others at the action-space discretization step. A stakes function is introduced to modulate how different agents sense rewards from discovered states of the system. The consequences are three-fold: (i) agents learn to prioritize CVs using only relevant data, (ii) redundant exploration is reduced, and (iii) agents that obtain higher stakes are assigned more actions. We compare our algorithm with other adaptive sampling techniques (least counts, REAP, TSLC, and AdaptiveBandit) to show and rationalize the gain in performance.